Camera-Free Finger Tracking For Real-World Input

Problem Definition

Traditional media and interaction controls are awkward in motion-heavy environments, and vision-based tracking depends on lighting and clear line of sight. Existing magnetic approaches prove the sensing concept, but they tend to be bulky, interference-prone, or hard to access as a compact developer platform.

Problem Solution

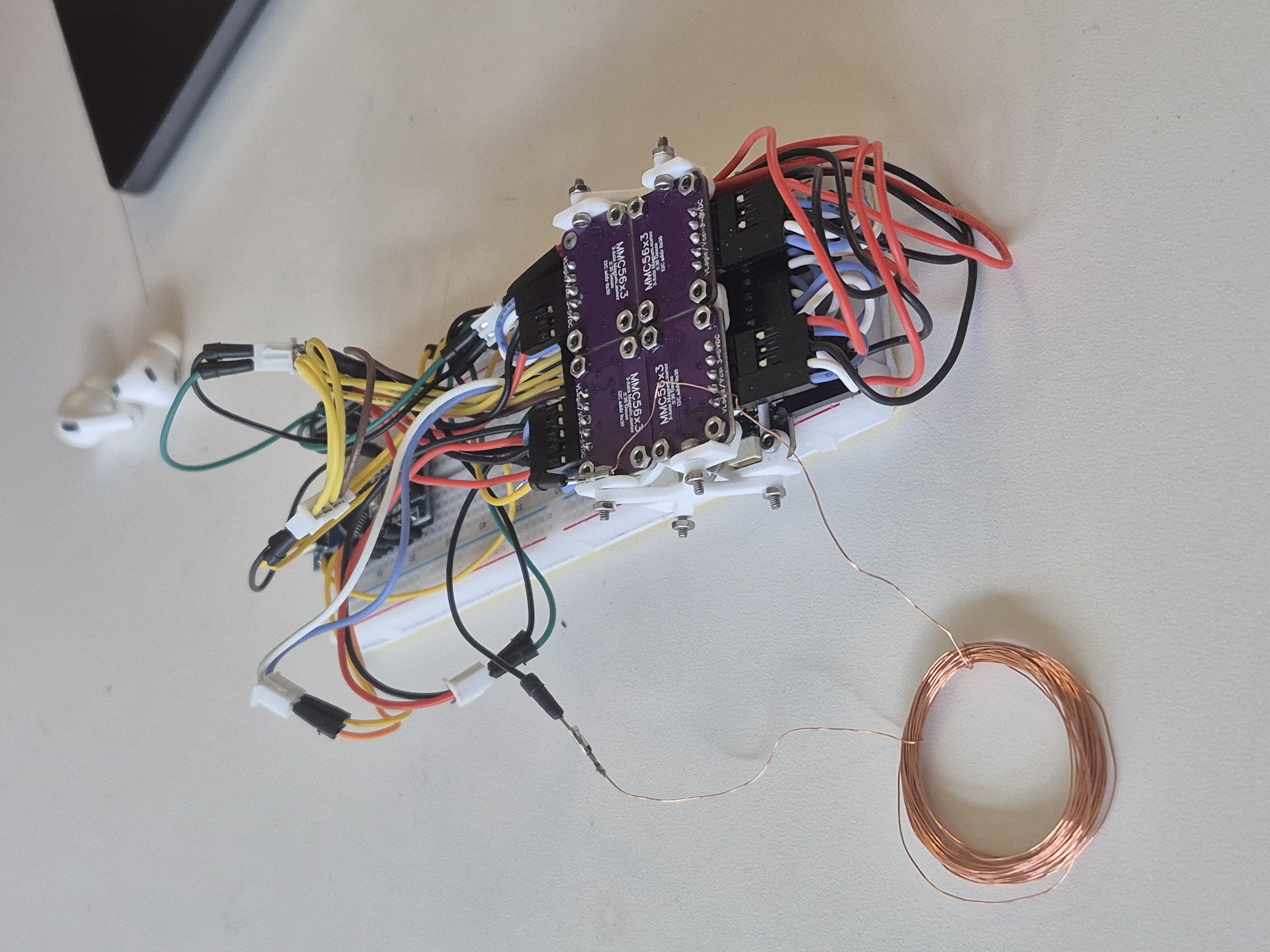

GestureLink uses a pulsed electromagnetic ring and an array of eight tri-axial magnetometers mounted near the wrist to estimate finger pose without cameras. The prototype separates the ring signal from background fields, routes multi-sensor data through a compact acquisition stack, and pushes the processed output into a live viewer for real-time interaction experiments.

Where This Fits

Why Magnetic

No line-of-sight requirement means the system can keep working in poor lighting, partial occlusion, and movement-heavy conditions where camera tracking is less dependable.

Why Wearable

The goal was not a lab-only tracking rig. The team pushed toward a small-form-factor input device that could plausibly live on the body and feel useful outside a desk setup.

Why It Matters

This creates a path toward VR/AR finger tracking, slide control, hands-free media input for skiing or biking, and a more general developer-friendly gesture platform.

System Architecture

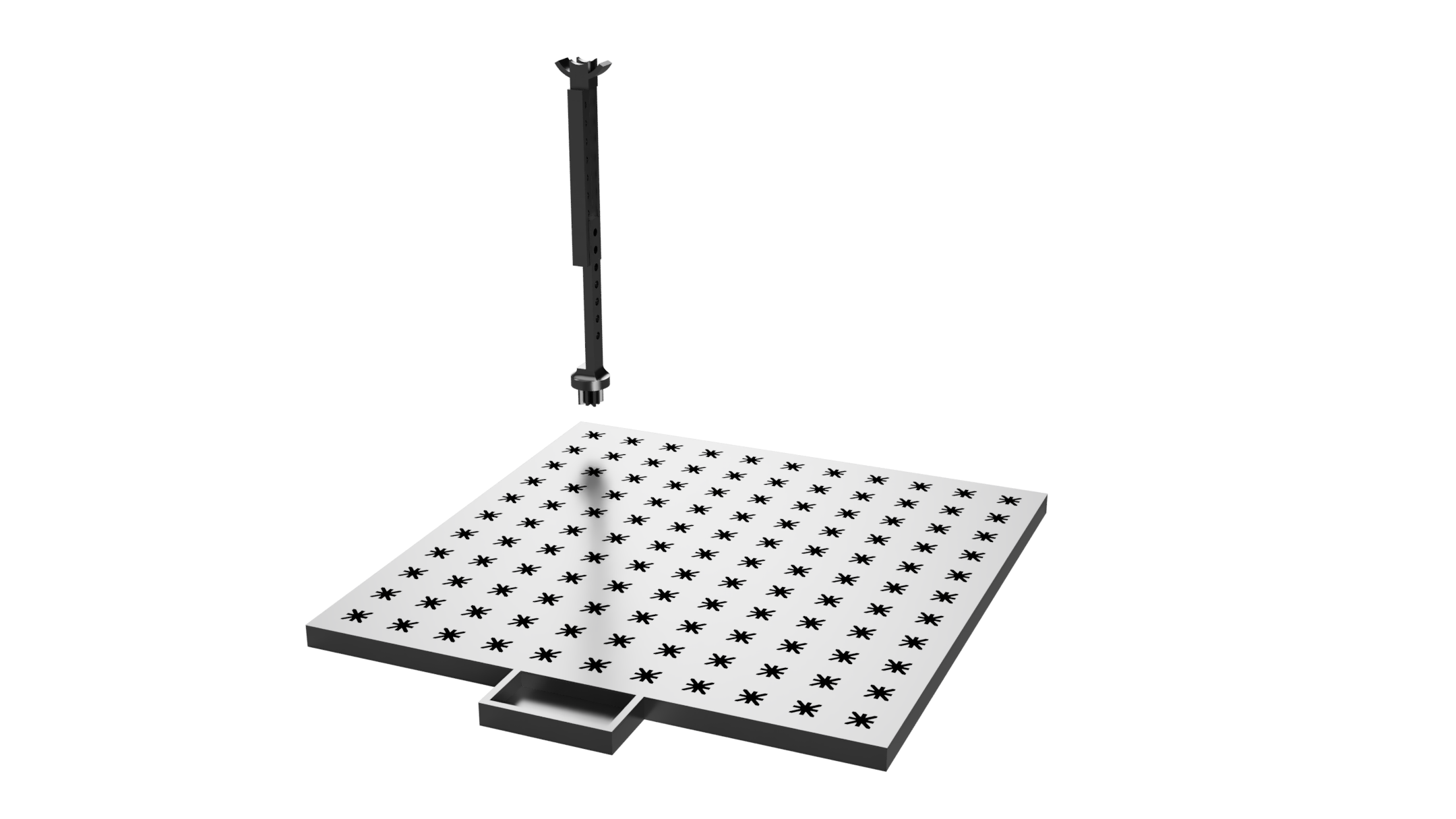

Field Generation

A pulsed ring coil creates a controlled magnetic field signature that is easier to distinguish from background magnetic noise than a passive magnet alone.

- Pulsing improves signal discrimination.

- The architecture leaves room for tracking multiple rings later.

- Low current requirements keep wearable power realistic.
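One way to see why pulsing helps: a static background field looks the same whether the coil is on or off, so differencing coil-on and coil-off samples cancels it and leaves only the ring's contribution. The sketch below assumes a point-dipole approximation for the ring (moment m = N·I·π·a²), an Earth-scale static background, and made-up coil parameters. The page does not state the team's field model or coil values, and all function names here are hypothetical.

```python
import numpy as np

MU0 = 4e-7 * np.pi  # vacuum permeability (T·m/A)


def ring_moment(turns, current_a, radius_m, axis):
    """Dipole moment of a flat coil, m = N * I * pi * a^2, pointing along `axis`."""
    axis = np.asarray(axis, dtype=float)
    return turns * current_a * np.pi * radius_m**2 * axis / np.linalg.norm(axis)


def dipole_field(m, ring_pos, sensor_pos):
    """Point-dipole field in tesla: B = mu0/(4 pi) * (3 (m . r_hat) r_hat - m) / |r|^3."""
    r = np.asarray(sensor_pos, dtype=float) - np.asarray(ring_pos, dtype=float)
    dist = np.linalg.norm(r)
    r_hat = r / dist
    return MU0 / (4 * np.pi) * (3 * np.dot(m, r_hat) * r_hat - m) / dist**3


# Hypothetical coil: 30 turns, 100 mA, 11 mm radius, axis along z.
m = ring_moment(turns=30, current_a=0.1, radius_m=0.011, axis=[0, 0, 1])
b_ring = dipole_field(m, ring_pos=[0, 0, 0], sensor_pos=[0, 0, 0.04])  # sensor 4 cm away

# Simulated magnetometer stream: static background + pulsed ring + sensor noise.
rng = np.random.default_rng(0)
n = 200
coil_on = np.arange(n) % 2 == 0                # alternate on/off samples (50% duty)
background = np.array([20e-6, 0.0, 45e-6])     # Earth-scale static field (hypothetical)
noise = rng.normal(0.0, 0.5e-6, size=(n, 3))
samples = background + coil_on[:, None] * b_ring + noise

# On-minus-off differencing cancels the static background, leaving the ring field.
ring_estimate = samples[coil_on].mean(axis=0) - samples[~coil_on].mean(axis=0)
print("true ring field (uT):     ", b_ring * 1e6)
print("estimated ring field (uT):", ring_estimate * 1e6)
```

A passive magnet has no "off" state to subtract, so its field cannot be separated from the background this way. For a periodic pulse train, a frequency-selective filter (such as the Goertzel filtering listed under Processing Pipeline) plays the role of the on/off average.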

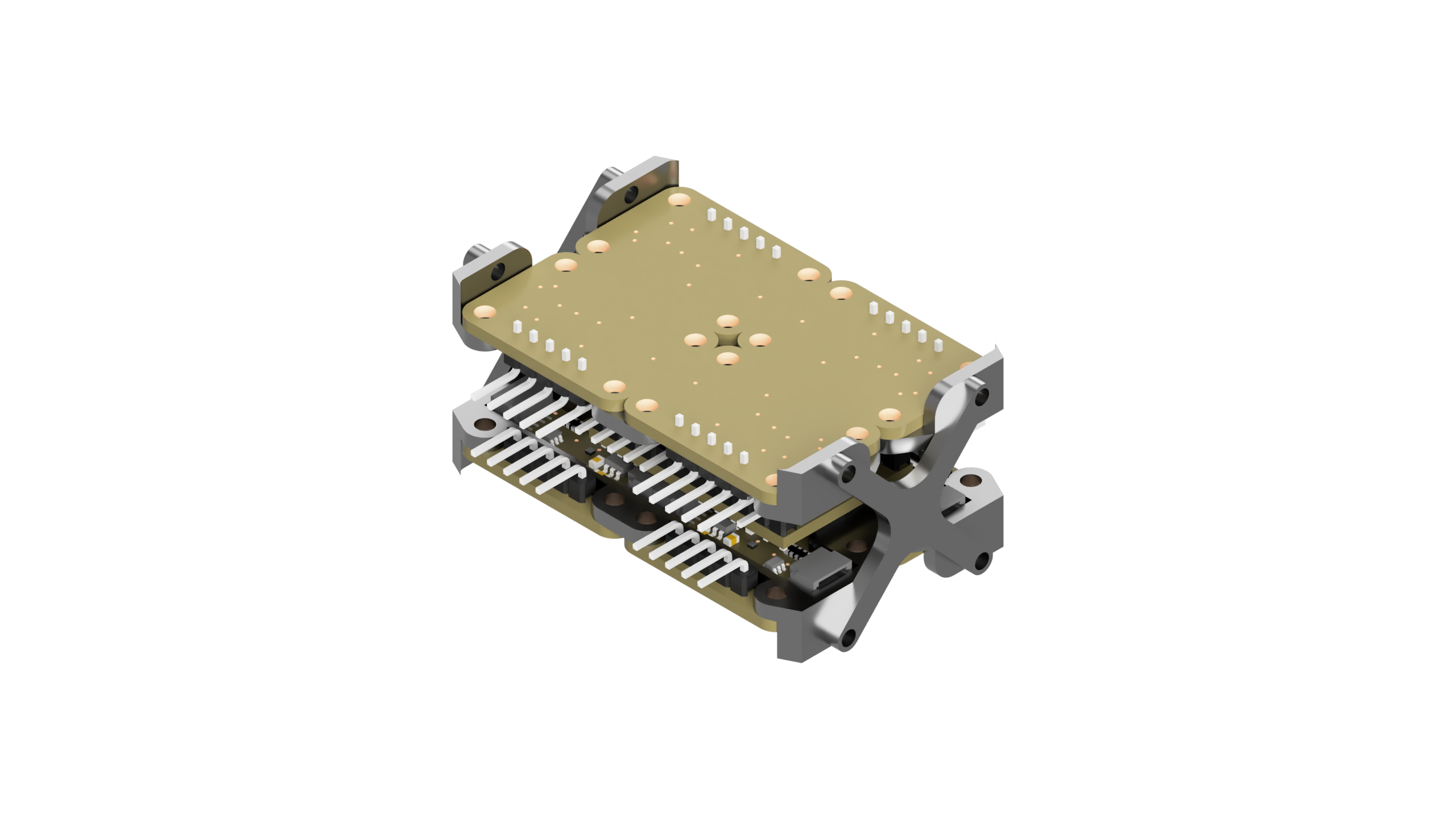

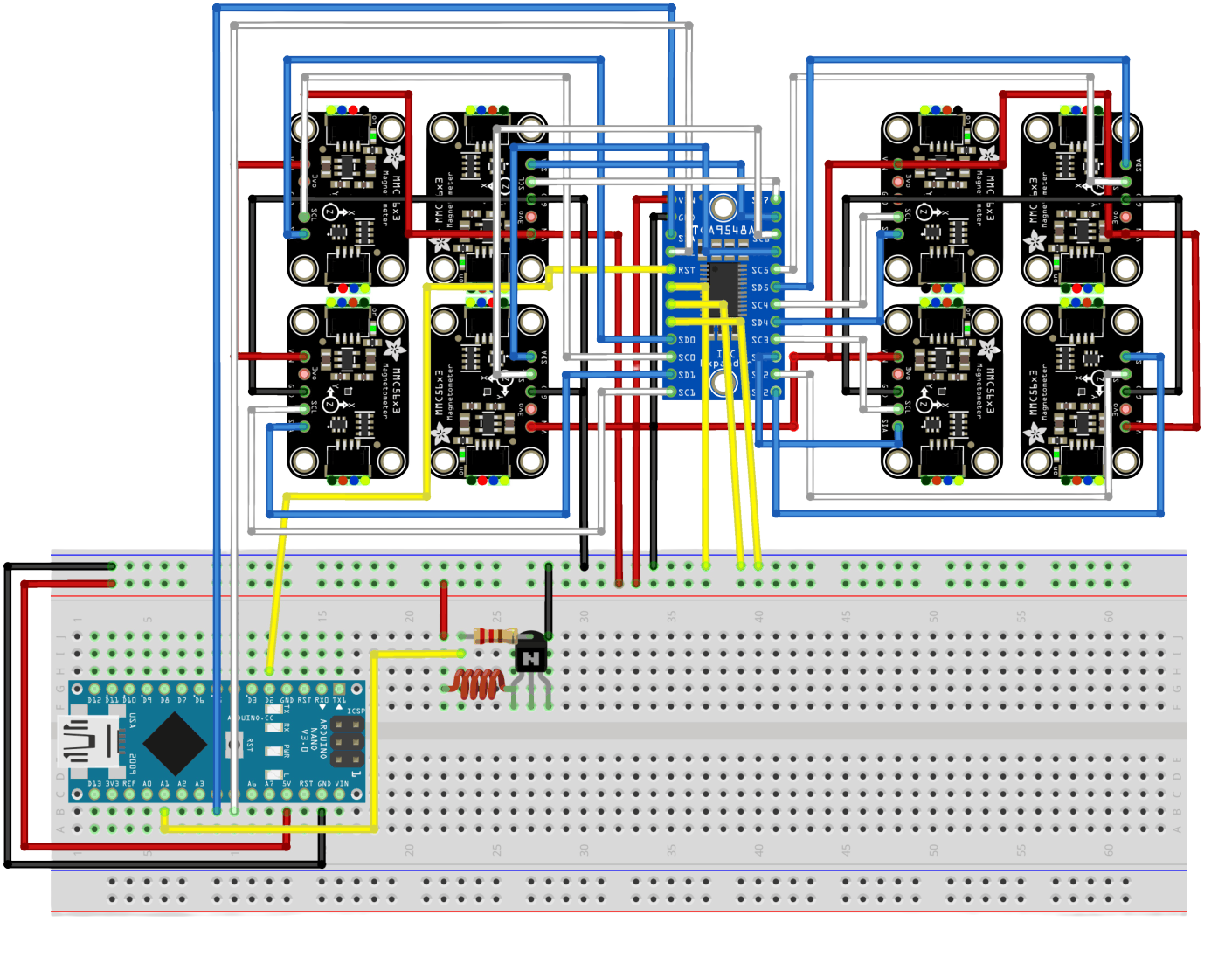

Sensor Stack

Eight tri-axial magnetometers are arranged in a layered 4-top / 4-bottom layout near the wrist to improve spatial sensitivity and reconstruct the field more reliably in 3D.

- Layered geometry improves observability.

- A serial multiplexer enables many sensors on limited pins.

- An Arduino Nano coordinates acquisition and coil control.
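The observability point can be checked numerically. Stack all eight sensors' 3-axis readings into one 24-value vector, take its Jacobian with respect to the ring position, and inspect the singular values. A small smallest singular value means some direction of ring motion barely changes the readings. This is a minimal sketch that assumes a point-dipole ring model and uses made-up sensor coordinates, ring position, and moment. None of these come from the page, and the helper names are hypothetical.

```python
import numpy as np

MU0 = 4e-7 * np.pi  # vacuum permeability (T·m/A)


def dipole_field(m, ring_pos, sensor_pos):
    """Point-dipole field in tesla at sensor_pos for a moment m located at ring_pos."""
    r = np.asarray(sensor_pos, dtype=float) - np.asarray(ring_pos, dtype=float)
    dist = np.linalg.norm(r)
    r_hat = r / dist
    return MU0 / (4 * np.pi) * (3 * np.dot(m, r_hat) * r_hat - m) / dist**3


def measurement(ring_pos, sensors, m):
    """Stack the 3-axis readings of every sensor into one vector (8 sensors -> 24 values)."""
    return np.concatenate([dipole_field(m, ring_pos, s) for s in sensors])


def position_jacobian(ring_pos, sensors, m, eps=1e-4):
    """Central-difference Jacobian of the stacked readings w.r.t. ring (x, y, z)."""
    ring_pos = np.asarray(ring_pos, dtype=float)
    cols = []
    for k in range(3):
        step = np.zeros(3)
        step[k] = eps
        diff = measurement(ring_pos + step, sensors, m) - measurement(ring_pos - step, sensors, m)
        cols.append(diff / (2 * eps))
    return np.column_stack(cols)


# Hypothetical layouts (metres): 4 sensors above and 4 below the wrist vs. 8 on one face.
square = [(x, y) for x in (-0.015, 0.015) for y in (-0.015, 0.015)]
layered = [(x, y, 0.025) for x, y in square] + [(x, y, -0.025) for x, y in square]
single = [(x, y, 0.025) for x in (-0.03, -0.01, 0.01, 0.03) for y in (-0.015, 0.015)]

m = np.array([1e-3, 0.0, 0.0])   # ring moment along the finger (x) axis, hypothetical
ring = [0.08, 0.01, 0.0]         # ring about 8 cm from the sensor cluster, hypothetical

for name, layout in [("layered", layered), ("single layer", single)]:
    sv = np.linalg.svd(position_jacobian(ring, layout, m), compute_uv=False)
    # sv[-1] = min over unit displacements d of |J d|: sensitivity along the
    # least observable direction (a local, linearized measure).
    print(f"{name}: singular values {sv}, condition number {sv[0] / sv[-1]:.1f}")
```

Comparing the smallest singular value, or the condition number, across candidate layouts is a quick linearized way to compare sensor geometries before building them. It says nothing about noise or nonlinearity far from the chosen ring position.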

Processing Pipeline

After acquisition, the signal is filtered in software and pushed through a lightweight pose-estimation stage before being displayed in a live viewer.

- Goertzel-based filtering isolates the pulsed response.

- A small neural network estimates the ring's position.

- The viewer made rapid demo iteration and debugging easier.
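Goertzel filtering evaluates a single DFT bin with a cheap second-order recurrence, which suits a signal whose pulse frequency is known in advance. The sketch below is one plain-Python implementation. The 50 Hz sample rate, 2 Hz pulse, 5 s window, and signal levels are hypothetical, and the page does not say how the team sized its windows or bins.

```python
import math
import random


def goertzel_amplitude(samples, sample_rate_hz, target_hz):
    """Amplitude of the DFT bin nearest target_hz, computed with the Goertzel recurrence."""
    n = len(samples)
    k = round(n * target_hz / sample_rate_hz)   # nearest DFT bin index
    omega = 2.0 * math.pi * k / n
    coeff = 2.0 * math.cos(omega)
    s_prev, s_prev2 = 0.0, 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2        # second-order resonator tuned to bin k
        s_prev2, s_prev = s_prev, s
    power = s_prev**2 + s_prev2**2 - coeff * s_prev * s_prev2   # equals |X[k]|^2
    return 2.0 * math.sqrt(max(power, 0.0)) / n  # convert bin magnitude to sinusoid amplitude


# Hypothetical single-axis stream: 50 Hz sampling, 2 Hz ring pulse, 5 s window.
fs, pulse_hz, n = 50.0, 2.0, 250
random.seed(0)
stream = []
for i in range(n):
    t = i / fs
    ring_on = 1.0 if (t * pulse_hz) % 1.0 < 0.5 else 0.0          # roughly 50% duty square pulse
    stream.append(45.0 + 1.5 * ring_on + random.gauss(0.0, 0.3))  # uT: background + ring + noise

print("at pulse rate:", goertzel_amplitude(stream, fs, pulse_hz))
print("off-target (7 Hz):", goertzel_amplitude(stream, fs, 7.0))
```

Choosing a window that spans a whole number of pulse periods (here 10) places the pulse frequency exactly on bin k, which avoids spectral leakage from the pulse itself, while the static background sits in bin 0 and contributes nothing to bin k. For an ideal 50% duty pulse of height A, the fundamental's amplitude is about 2A/π.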

Validation and Results

The team compared simulated and measured magnetic field values after global alignment to check whether the simplified field model still described real hardware behavior well enough to be useful.

Pearson Correlation

r = 0.834

Strong linear agreement between simulated and measured field values.

Spearman Correlation

rho = 0.875

Strong rank-order (monotonic) agreement even where absolute values drifted.

Normalized Error

NRMSE = 0.327

Moderate absolute error, but enough relative fidelity to validate the approach.
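The three metrics above can be computed from paired simulated and measured values as follows. This sketch uses NumPy only. The NRMSE normalization (by the measured range) and the gain-plus-offset form of "global alignment" are assumptions, since the page defines neither, and the function names are hypothetical.

```python
import numpy as np


def pearson_r(a, b):
    """Pearson linear correlation coefficient."""
    return float(np.corrcoef(a, b)[0, 1])


def average_ranks(x):
    """1-based ranks, with tied values sharing the average of their ranks."""
    x = np.asarray(x, dtype=float)
    ranks = np.empty(len(x))
    ranks[np.argsort(x, kind="mergesort")] = np.arange(1, len(x) + 1)
    for value in np.unique(x):
        tied = x == value
        ranks[tied] = ranks[tied].mean()
    return ranks


def spearman_rho(a, b):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson_r(average_ranks(a), average_ranks(b))


def nrmse(simulated, measured):
    """RMSE normalized by the measured range (one common convention; others use mean or std)."""
    simulated = np.asarray(simulated, dtype=float)
    measured = np.asarray(measured, dtype=float)
    rmse = np.sqrt(np.mean((simulated - measured) ** 2))
    return float(rmse / (measured.max() - measured.min()))


def align_gain_offset(simulated, measured):
    """Hypothetical 'global alignment': least-squares fit measured ~ gain * simulated + offset."""
    gain, offset = np.polyfit(simulated, measured, 1)
    return gain * np.asarray(simulated, dtype=float) + offset


# Illustrative values only, not the team's data.
sim = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
meas = np.array([2.1, 3.9, 6.2, 7.8, 10.1])
aligned = align_gain_offset(sim, meas)
print(pearson_r(aligned, meas), spearman_rho(aligned, meas), nrmse(aligned, meas))
```

With a positive fitted gain, this alignment leaves Pearson and Spearman unchanged (both are invariant to positive affine rescaling), so only NRMSE depends on how alignment is done. That is one reason strong correlations can sit alongside a moderate NRMSE.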

Geometry Finding

Simulation suggested the best performance window was around a 10-12 mm ring radius.

Pulse Finding

Lower pulse rates in the 1-3 Hz range behaved better than faster pulsing in the current prototype.

Main Limitation

Performance was limited more by observability, sampling, and data quality than by electrical resonance.
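A back-of-the-envelope view of the sampling constraint: if the Nano reads the eight magnetometers round-robin through the multiplexer (an assumption; the page does not describe the read schedule), each sensor gets only one eighth of the total read rate, and faster pulses leave fewer samples per on/off cycle. The page does not report the acquisition rate, so the 200 reads/s figure is purely hypothetical, and this is a plausible contributing factor rather than the team's stated explanation for the 1-3 Hz finding.

```python
def sampling_budget(total_reads_per_s, n_sensors, pulse_hz):
    """Per-sensor sample rate and samples per pulse period for round-robin multiplexed reads."""
    per_sensor_hz = total_reads_per_s / n_sensors      # each sensor gets 1/n of all reads
    samples_per_period = per_sensor_hz / pulse_hz      # samples covering one on/off cycle
    return per_sensor_hz, samples_per_period


# Hypothetical budget: 200 full 3-axis sensor reads per second shared by 8 sensors.
for pulse in (1.0, 2.0, 3.0, 6.0, 10.0):
    rate, per_period = sampling_budget(200.0, 8, pulse)
    print(f"{pulse:4.1f} Hz pulse: {rate:.1f} Hz per sensor, {per_period:.1f} samples per period")
```

At a fixed read budget, doubling the pulse rate halves the number of samples covering each on and off half-cycle.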

Poster and Next Steps

The current prototype demonstrated that magnetic-field-based gesture tracking is feasible in a wearable form factor, but it is still early-stage hardware. The next iteration should focus on strengthening the weak axes, improving sampling reliability, and turning raw pose estimates into usable gestures.

- Extend tracking beyond the single strongly observed direction toward full 3D pose estimation.

- Add multi-ring support so multiple fingers can be tracked in parallel.

- Improve stability, calibration, and sampling reliability across cycles.

- Explore a three-coil architecture to balance observability across axes.

- Add a gesture-recognition layer for media, slide, and XR interaction tasks.